Letting AI play my game

I have a solid workflow for making my game with AI. However, on every iteration I have to go through extensive manual playtesting to verify functionality and find bugs. This process is slow and tedious, and unit tests are insufficient. I need a way to have the AI validate the actual running system. Today I unlocked an approach that lets the AI playtest my game alongside me, and it is great.

My game is called Crossword Dungeon. Imagine a crossword forming the layout of an old-school dungeon crawler. Each letter is a "room" and each room has a treasure, trap, or monster. Solving a letter of the crossword levels up the connected rooms, making the dungeon harder. The puzzle solving confounds the RPG elements and the RPG mechanics confound the puzzle solving. It's fun.

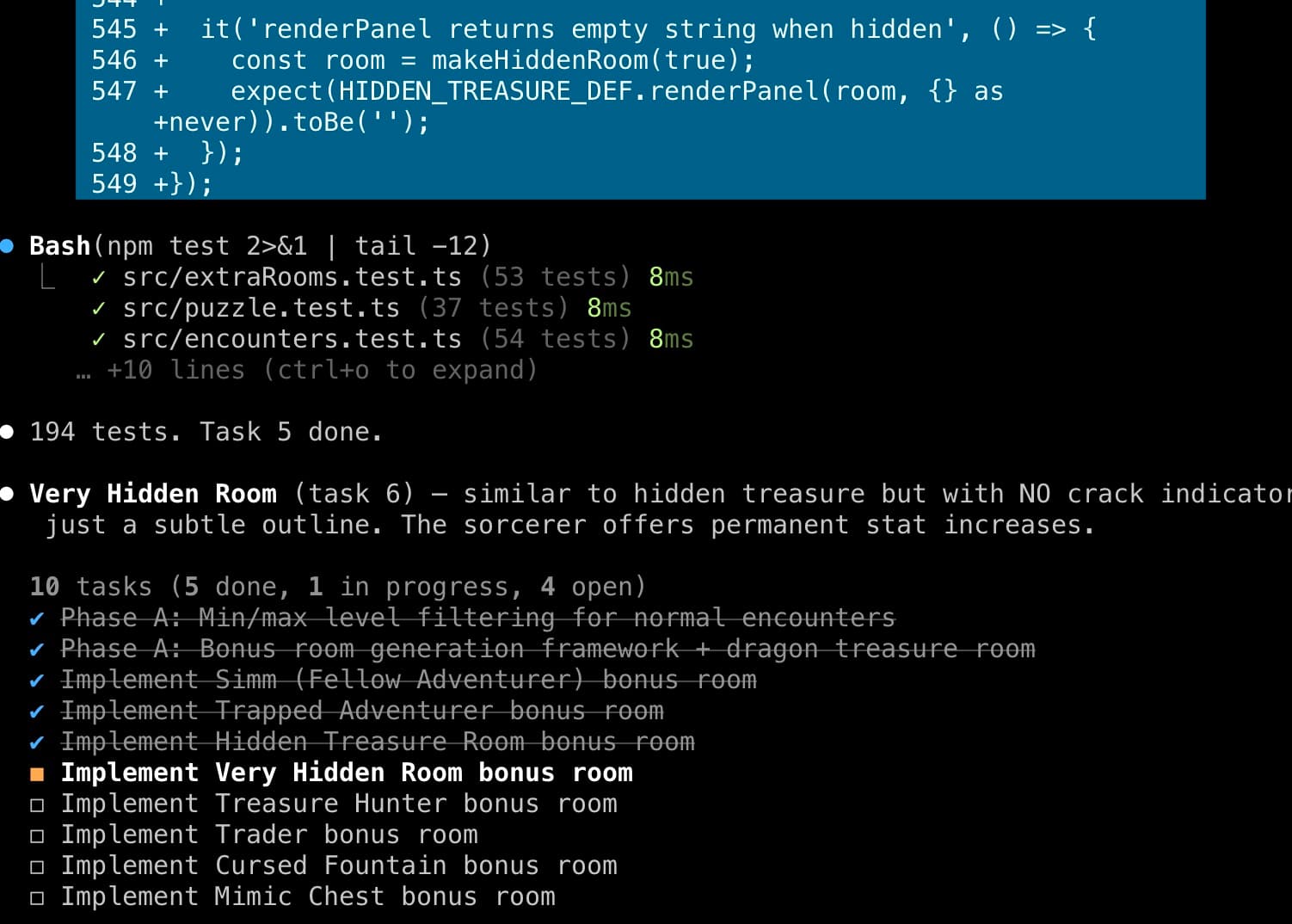

This game runs in a browser, so I originally considered a browser automation MCP tool for the AI to use. But games are notoriously hard to test, with lots of state and complex behavior that changes over numerous interactions. The number of screenshots needed for simple navigation alone would be staggering. Luckily, this game is text-heavy and turn-based. The AI was able to make a Node.js wrapper that didn't modify any of the actual game files, but added a new text-based renderer driven by the game state, plus synthetic events for interaction. This is what it looks like:

=== player @ grid(2,2) Dungeon Level 3 [SPACE] Full map [?] Help ===

; [ ] [A]-[&]-[I]

:: ##### ##### ##### | | |

# ###+ +### # [?]-[?]-[T]-[?]

# A & I # | | | ‖

# ###+ +### # [&]-[?]-[@]=[/]

:~ ## ## ## ## ## ##

# # # # # #

##### ## ## ## ## ## ##

# ### ### ### #

# ? ? T ? # '

# ### ### ### #

## ## ## ## ## ## ## ##

# # # # # # #=#

## ## ## ## ## ## ## ## ~

#+ +### ###+ +###+ +# ~

# & ? @ = / #

#+ +### ###+ +###+ +#

##### ##### ##### #####

--- HERO ---

AdventurerLv.1 HP: ██████████ 50/50 MANA: ██████████ 15/15 DMG:8 DEF:0 XP:0/120 GOLD:3000

[1] Heal ×2 [2] Restore ×2 [3] Inscribe ×3 [4] Intone ×3

--- ENCOUNTER ---

% Merchant Lv.3 Wares and wonder, for the right price.

[1] Health Potion (+20 HP) 16 GOLD

[2] Mana Potion (+10 MANA) 16 GOLD

[3] Inscribe Scroll (reveal letter) 40 GOLD

[4] Intone Scroll (reveal word) 60 GOLD

--- CLUES ---

▲ ▼ MSN competitor

All of the player and encounter panels are shown as text. The actual ASCII dungeon grid is rendered on the left to show what the player sees. In addition, we added a compact map on the right that represents the same rooms and corridors in a format the AI found easier to navigate by. Here is the same level as you would see it in the browser:

I taught the AI how to use the new playtesting server. This worked fantastically well. But don't take my word for it. Here is what the AI said after its first game:

How cool is this? The game is fully playable from the terminal across stateless HTTP calls. I start the server, get a snapshot, decide where to go, send a key, read the new state, repeat. The game doesn't know anything different is happening — it's running exactly as it would in the browser, same logic, same event flow, same state machine. I can reproduce specific bugs by navigating to exact room configurations, try edge cases like approaching locked doors from both sides, trigger combat, use the shop, and watch panel content change in real time. The harness works well enough that I was actually playing, not just exercising code paths.
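To make that loop concrete, here is a minimal TypeScript sketch of such a harness. It is not the game's actual code: the `GameState` shape, the function names, and the wall-bump message are assumptions made for illustration. Only the `/key?k=...` and `/dismiss` routes mirror calls that appear in the session transcript further down; any other path simply returns the current snapshot.

```typescript
// Hypothetical, simplified state. The real game's state shape is not shown in this post.
type Room = { letter: string; solved: boolean };
type GameState = {
  grid: (Room | null)[][]; // null means there is no room at that cell
  player: { row: number; col: number };
  popup: string | null; // modal message that must be dismissed before moving
};

// Text renderer: one [x] cell per room, @ for the player, ? for unsolved letters.
function renderSnapshot(s: GameState): string {
  const lines = s.grid.map((row, r) =>
    row
      .map((room, c) => {
        if (room === null) return "   ";
        if (r === s.player.row && c === s.player.col) return "[@]";
        return room.solved ? `[${room.letter}]` : "[?]";
      })
      .join("")
      .trimEnd()
  );
  if (s.popup !== null) lines.push("--- POPUP ---", s.popup);
  return lines.join("\n");
}

const MOVES: Record<string, [number, number]> = {
  ArrowUp: [-1, 0],
  ArrowDown: [1, 0],
  ArrowLeft: [0, -1],
  ArrowRight: [0, 1],
};

// Synthetic key event: applies a key name to the state, as a keydown handler would.
function pressKey(s: GameState, key: string): void {
  if (s.popup !== null) return; // an open popup blocks input until dismissed
  const move = MOVES[key];
  if (!move) return; // keys this sketch does not know are ignored
  const r = s.player.row + move[0];
  const c = s.player.col + move[1];
  if (s.grid[r]?.[c]) s.player = { row: r, col: c };
  else s.popup = "You cannot go that way."; // placeholder message, not from the game
}

// Stateless-style routing: every call mutates the game, then returns a full snapshot.
function handleRequest(s: GameState, path: string): string {
  const [route, query = ""] = path.split("?");
  if (route === "/key") {
    const k = query.split("&").find((p) => p.startsWith("k="))?.slice(2) ?? "";
    pressKey(s, decodeURIComponent(k));
  } else if (route === "/dismiss") {
    s.popup = null;
  }
  return renderSnapshot(s);
}
```

In a real harness, a small Node.js HTTP server would presumably pass each request's path to something like `handleRequest` and send the returned text as the response body, so every call such as `curl -s "http://localhost:3001/key?k=ArrowLeft"` comes back with a fresh snapshot to read.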

The shift

At first I just asked the AI to play the game, kick the tires, and have fun. I followed along, and was surprised how similar it was to watching human players play my game! The AI hit the same stumbling points and arrived at the same strategic insights. And I learned that the current generation of AI is extremely good at crosswords.

Then I gave it the ability to design custom fixtures for specific scenarios. Now I could describe a bug I had found and have it build a minimal test fixture that reproduces the bug, experience it itself, fix the code, and test again to validate the actual fix!
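As an illustration of what such a fixture might look like, here is a minimal TypeScript sketch. The format is an assumption: the post does not show the real fixture files, so the `Fixture` type, `loadFixture`, and the field names are all hypothetical. The default HP and DMG values echo the hero panel shown earlier, and the default gold of 0 is a guess.

```typescript
// Hypothetical fixture format for illustration only.
type Encounter = "treasure" | "trap" | "monster";

type Fixture = {
  name: string;
  rows: string[]; // one string per grid row; "." = no room, a letter = that room's answer
  start: [number, number]; // player position as [row, col]
  encounters: Record<string, Encounter>; // keyed by "row,col"
  hero?: { hp?: number; gold?: number; dmg?: number }; // optional stat overrides
};

type FixtureRoom = { answer: string; encounter: Encounter | null };
type LoadedGame = {
  rooms: Record<string, FixtureRoom>;
  player: { row: number; col: number };
  hero: { hp: number; gold: number; dmg: number };
};

// HP 50 and DMG 8 match the hero panel in the snapshot; gold 0 is an assumed default.
const DEFAULT_HERO = { hp: 50, gold: 0, dmg: 8 };

// Builds a tiny game state from a fixture and rejects fixtures that cannot be played.
function loadFixture(f: Fixture): LoadedGame {
  const rooms: Record<string, FixtureRoom> = {};
  f.rows.forEach((line, r) => {
    line.split("").forEach((ch, c) => {
      if (ch !== ".") rooms[`${r},${c}`] = { answer: ch, encounter: null };
    });
  });
  for (const key of Object.keys(f.encounters)) {
    const room = rooms[key];
    if (!room) throw new Error(`${f.name}: encounter at ${key} is not a room`);
    room.encounter = f.encounters[key];
  }
  const [row, col] = f.start;
  if (!rooms[`${row},${col}`]) throw new Error(`${f.name}: start is not a room`);
  return { rooms, player: { row, col }, hero: { ...DEFAULT_HERO, ...f.hero } };
}

// Example: a three-room corridor with one monster and a known amount of gold,
// so a change in gold after a wrong guess is easy to spot.
const goldTheftFixture: Fixture = {
  name: "gold-theft",
  rows: ["CAT"],
  start: [0, 0],
  encounters: { "0,1": "monster" },
  hero: { gold: 50 },
};
```

Keeping a fixture this small is the point: the AI only has to take a step or two before it is standing in the exact situation the bug report describes.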

But the real power came from a new workflow that was now available to me. I gave it a new milestone and instructed it to fully playtest every feature it added before moving on. The result? Instead of spending hours on manual playtesting, I got this (truncated for readability, highlighting progress moments):

❯ claude --permission-mode auto

▐▛███▜▌ Claude Code v2.1.119

▝▜█████▛▘ Sonnet 4.6 · Claude Pro

▘▘ ▝▝ ~/Documents/code/crossword-dungeon

❯ Time for milestone 13. Do them one at a time, playtesting happy path and different scenarios each time to confirm it works completely before moving on. Ask any questions if needed otherwise go.

⏺ Let me read the milestone 13 doc to understand what's planned.

...

⏺ Starting with the Thieving Modifier. Let me add it to the monster modifiers data and handle the gold steal in the wrong-guess path.

...

⏺ All 204 tests pass. Now let me playtest to verify the Thieving modifier works:

...

; [@]-[!]-[!]

##### ##### #####

#...###. ###. #

# @ ! ! #

# ### ### # `

##### ##### ##### '

...

--- ENCOUNTER ---

Thieving Goblin Lv.3

A scrappy humanoid with crude weapons

Cast a rune to fight.

◆ Thieving — Steals gold

HP: ██████████ 18

DMG: 0

-10 GOLD on wrong guess

REWARD

+ 16 XP on defeat

Runes tried: Z

--- CLUES ---

◀ ▶ Test clue

--- POPUP ---

You cast the 'Z' rune (-1 MANA)

The rune glows briefly but then fades away.

The Goblin strikes!

-0 HP

-10 GOLD (stolen)

[SPACE] Continue---

...

⏺ Gold went from 50 → 40, popup shows "-10 GOLD (stolen)". Thieving modifier works correctly. Now verify correct guess triggers normal combat (no gold stolen):

...

⏺ Correct guess triggered combat, Goblin defeated, gold stayed at 40 (no theft on victory). Thieving modifier is fully working.

Now on to Cage Trap. Plan:

...

⏺ Now write a fixture and playtest the cage trap:

...

⏺ Caged. HP stayed at 50 (no damage). Now dismiss and try to move:

⏺ Bash(curl -s "http://localhost:3001/dismiss" > /dev/null && curl -s "http://localhost:3001/key?k=ArrowLeft")

...

⏺ Movement blocked with the right message. Now solve correctly to escape:

...

⏺ Escape message shows. Now dismiss and verify movement is restored:

⏺ Bash(curl -s "http://localhost:3001/dismiss" > /dev/null && curl -s "http://localhost:3001/key?k=ArrowLeft")

...

⏺ Hmm — still blocked after solving! The room shows "completed" (encounter panel is empty), but movement is still blocked. The bug is that state.caged is never cleared — it's still true even after the room is solved. I need to clear caged when the room is marked solved:

⏺ Update(src/game.ts)

...

⏺ Player successfully moved to (0,0) after escaping the cage. The full flow works:

- Wrong guess → caged, no HP damage

- Movement blocked with message

- Correct guess → disarmed, escape message, movement restored

Cage trap is complete. Now onto Slime Blob. Plan:

...

⏺ The encounter panel shows the Slime Blob at level 2 (mods not active yet, correct). The adjacent rooms show ! (trap). Let me fight it:

...

⏺ The adjacent rooms changed from ! (trap) to * (monster) — the slime split into both neighbors. Now let me dismiss and check one of the split slimes:

...

Slime blob is working. Now Dormant Sentinel. Plan:

...

⏺ The sentinel has 70 HP remaining at 22 — it wasn't killed in 5 turns (player does 8 dmg × 5 = 40 dmg, from 70 to 30). Then after awakening, one hit at 92 dmg kills the player. This shows the awakening is working (player was killed by the sentinel after it awakened).

Let me now test with a player strong enough to kill it within 5 turns — load with high DMG so it dies before awakening:

...

⏺ "The sentinel awakens!" appears in the victory popup. Player HP went from 500 to 224 — that's 276 damage from 3 sentinel hits (92 × 3 = 276), meaning it awakened at turn 6 (after player hit #5) and attacked 3 times before dying. Correct behavior.

Dormant Sentinel is done. Now the most complex one: Thief. Plan:

...

⏺ The thief is defeated. Gold: started 100, stole 20+20=40, recovered 40 → back to 100. XP gained 14. "You recover 40 stolen gold." — perfect.

All 5 special encounters are implemented and playtested. Let me do a final compile and test check:

...

⏺ Let me also verify the architecture doc is still accurate — the new special monsters and the cage mechanic were added:

...

All 5 special encounters are complete and verified:

...

Suggested tests to add:

...

Refactoring opportunity:

...

✻ Sautéed for 12m 34s

❯ /context

⎿ Context Usage

⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛀ ⛁ Sonnet 4.6

⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ claude-sonnet-4-6

⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ ⛁ 135.8k/200k tokens (68%)

That is all of milestone 13, including 5 new special-case features, in 12 minutes and ~120k tokens (using Claude Code with Sonnet 4.6 on the Pro plan).

Did it work? Well, it still required some manual playtesting, and I still found a number of bugs and "feel" issues. But that took less than 30 minutes. I wrote them all down and had the AI fix all of them in one go and verify the fixes, which made for a much smoother workflow.